Last time out we were discussing defining your build pipeline in a YAML file. That post was an intro to what pipelines are and the benefits of defining the tasks that they run in a YAML file alongside your other source code. Now we’ll turn our attention to some considerations that are specific to Business Central.

Objectives

We’ll start by defining the key objectives of the build process:

- Download a particular version of the source AL code

- Create an appropriate Docker container to publish the app into and run the tests against (see below for more about what “appropriate” means in this context)

- Acquire the alc.exe compiler from the container and use it to compile the AL code into two apps (the main app and a dependent app that contains the tests)

- Acquire and install any necessary dependencies, install the main app and test app (see here)

- Execute the tests and export the results

- Upload test results, main app and test app to the build

- Remove the Docker container

Environment

Microsoft-hosted or self-hosted?

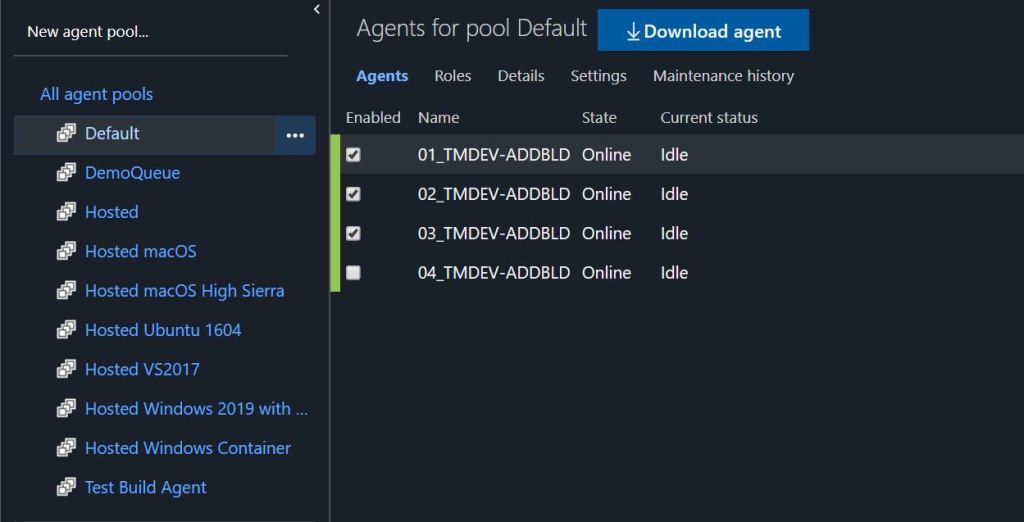

Microsoft give you a menu of different hosted build agents to execute your pipelines on and 1,800 minutes of build time per month for free. The obvious attraction of this option is not having to build and maintain your own infrastructure to run builds and you just pay for the time you use (assuming you exceed the free limit). The obvious downside is that you can’t prepare that environment as you’d like e.g. Docker images must be downloaded each time as part of the job. I can’t comment too much on this option as it isn’t something we’ve experimented with so far.

We host our own server that runs several build agents. The main driver for the decision at the time was that it allowed us to persist Docker images between builds (NAV images are approx. 15GB, although BC images are smaller) and save a substantial amount of time on each build.

With smaller Docker images these days it ought to be increasingly feasible to run BC builds on Microsoft-hosted agents in a sensible amount of time, even with the image being downloaded as part of each job.

Installing and Connecting the Build Agent

From the list of build agents (at https://dev.azure.com/<your organisation>/_settings/agentpools) you’ll see the link to Download the agent. Simply download and extract onto your build server. Run config.cmd and follow the instructions to connect the agent to your DevOps organisation.

You’ll need a Personal Access Token to authenticate. See here if you need a refresher on how to create those.

Triggers

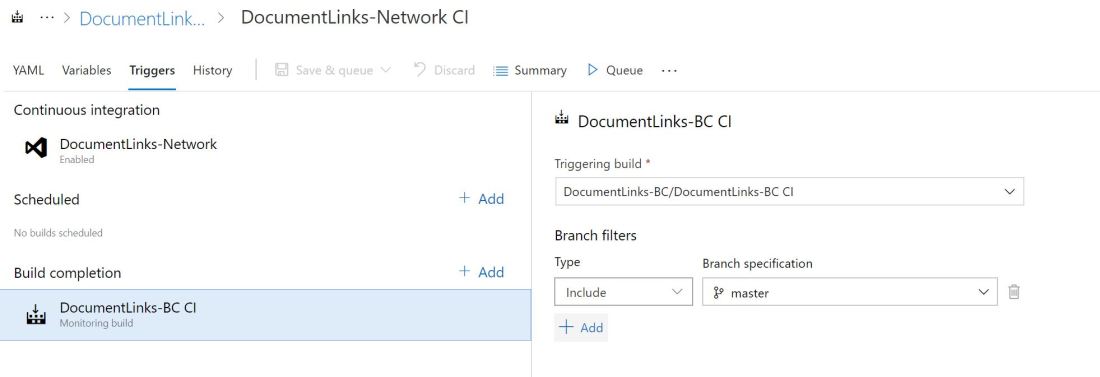

The majority of our builds are triggered by new code being pushed to a branch on the server i.e. continuous integration builds. DevOps handles downloading this version of the source code to the build agent to work on. This is defined by the trigger section of the .azure-pipelines.yml file:

    trigger:
      branches:
        include:
        - "*"

We are also starting to schedule more builds. This is useful for building our apps against insider builds of Business Central. Which brings me onto how we define the Docker image that we are going to build against.

Environment.json

Our apps include a JSON file, environment.json, that defines some parameters that are used by the build:

- The Docker image to build against

- The user name and password to create the new container with

- Translations (country and language) that must be present in the app

- Details of the Azure DevOps project/repo to acquire dependencies listed in app.json from (as described here)
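To make that a little more concrete, here is a minimal sketch of how a build script might read a value from such a file. The key names (image, user, password, translations) and the Get-EnvironmentKeyValue helper are assumptions made up for illustration; they are not necessarily the schema or the functions that our module uses.

```powershell
# Hypothetical helper: returns the value of one key from environment.json
# in the root of the source directory, or $null if the file is missing.
function Get-EnvironmentKeyValue {
    Param(
        [Parameter(Mandatory=$true)]
        [string]$SourcePath,
        [Parameter(Mandatory=$true)]
        [string]$KeyName
    )

    $EnvironmentFile = Join-Path $SourcePath 'environment.json'
    if (!(Test-Path $EnvironmentFile)) {
        return $null
    }

    $Environment = Get-Content -Path $EnvironmentFile -Raw | ConvertFrom-Json
    $Environment.$KeyName
}

# Create an example file in a temporary folder (key names and values are illustrative)
$SourcePath = Join-Path ([System.IO.Path]::GetTempPath()) ([guid]::NewGuid().ToString())
New-Item -Path $SourcePath -ItemType Directory | Out-Null
@'
{
  "image": "mcr.microsoft.com/businesscentral/sandbox:es",
  "user": "admin",
  "password": "<password>",
  "translations": [
    { "country": "es", "language": "es-ES" }
  ]
}
'@ | Set-Content -Path (Join-Path $SourcePath 'environment.json')

# Read the Docker image that the build should use
$Image = Get-EnvironmentKeyValue -SourcePath $SourcePath -KeyName 'image'
```

Keeping the file alongside the source means that the image to build against is versioned with the code, which is what makes the branching approach described below possible.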

Which Docker Image?

As a rule I develop and test against sandbox images (mcr.microsoft.com/businesscentral/sandbox). They are the closest thing to testing on SaaS that you can get without actually having a SaaS tenant. We always develop against the worldwide (W1) image and build against all of the localisations that we are planning to support.

The sandbox image has very little data in it, which is great for downloading new versions of the image and creating new containers but does mean that you have to handle more of the data setup in your tests than you would for an on-prem image. Yes, tests should be data-agnostic and run in an empty company but we still need to work around some bugs in standard library functions.

Branch per Docker Image

Because the Docker image is defined in a file in the repo, we can have a separate branch for each different Docker image that we want to build our app against. We have country/xyz branches where “xyz” is the Docker tag for the localisation that we need to support e.g. country/es, country/ca, country/nz.

At any moment these branches should be a single commit ahead of the feature branch we are working on, the only difference being the Docker image that is used. We can then rebase these branches on top of whichever commit we want to build. When we push those branches to the server continuous integration builds will be kicked off for each country.
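As a rough sketch of that rebase-and-push routine, the function below lists the git commands for each country branch so that they can be reviewed before being run. The Get-CountryRebaseCommand name and the feature/my-feature branch are hypothetical, and it assumes that each country branch carries exactly one extra commit (the one that changes the Docker image).

```powershell
# Hypothetical helper: lists the git commands needed to move each country
# branch onto the commit we want to build and push it to trigger a build.
function Get-CountryRebaseCommand {
    Param(
        [Parameter(Mandatory=$true)]
        [string[]]$Countries,
        [Parameter(Mandatory=$true)]
        [string]$Target
    )

    foreach ($Country in $Countries) {
        $Branch = "country/$Country"
        # Replay only the tip commit (the Docker image change) onto the target
        "git rebase --onto $Target ${Branch}~1 $Branch"
        # The branch history has been rewritten, so a force push is required
        "git push --force-with-lease origin $Branch"
    }
}

# Print the commands for three localisations
Get-CountryRebaseCommand -Countries 'es','ca','nz' -Target 'feature/my-feature'
```

Using --onto with the parent of the branch tip keeps the rebase to that single commit, even if the feature branch itself has been rewritten since the country branch was last updated.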

PowerShell Tasks

It won’t come as much of a surprise that the majority of tasks performed by the build are PowerShell scripts. You’ve got some different options for defining these scripts:

- Define them in .ps1 files alongside your source code

- Define them in .ps1 files that are saved on the build server (assuming that you are self-hosting the agent)

- Maintain the scripts somewhere else and share them with the build server

We started with the second option and have recently moved on to the third. All our scripts are now bundled into a PowerShell module which is published on the PowerShell Gallery. The module is installed and updated on the build server. Maybe I’ll post some more about our approach to PowerShell development, our build process for it and testing with Pester another time.

We use inline PowerShell tasks to import our module and run a command on the source like this:

    steps:
    - task: PowerShell@1
      displayName: 'Create packages and execute tests'
      inputs:
        scriptType: inlineScript
        inlineScript: 'Import-Module Tecman.Tfs.Tools;Run-ALBuildProcess ''$(Build.SourcesDirectory)'' ''$(Build.ArtifactsStagingDirectory)'' $(Build.BuildID) $true'

Compiling the App

Acquiring the Compiler

If you’ve read the output from the creation of a new Docker container then you’ve probably noticed that the corresponding version of the Visual Studio Code extension is included with the container. It is hosted at http://<containername>:8080/<name of vsix file>. You can get the precise URL to the file by inspecting the logs with docker logs <container name>.

Use the Download-File function to download the vsix to a local file. Download-File is not a built-in PowerShell cmdlet (navcontainerhelper provides a function of that name); Invoke-WebRequest would do the same job. The .vsix file, like a .app file, is an archive file containing the source of the extension. You can use Expand-Archive on the file to extract the contents of the .vsix to a local folder and find alc.exe in the extracted files. You’ll need to rename the file to .zip first to convince Expand-Archive that it is a format it can expand.

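    function Get-CompilerFromContainer
    {
        Param(
            [Parameter(Mandatory=$true)]
            [string]$ContainerName
        )

        # Path to the .vsix file that was downloaded from the container
        $VsixPath = Get-VSCodeExtensionFromContainer -ContainerName $ContainerName
        # Only extract the extension if we haven't already done so
        if (!(Test-Path "$VsixPath\Extract")) {
            # Expand-Archive only accepts files with a .zip extension
            Rename-Item $VsixPath "$VsixPath.zip"
            Create-EmptyDirectory "$VsixPath\Extract"
            Expand-Archive -Path "$VsixPath.zip" -DestinationPath "$VsixPath\Extract"
        }

        # Return the path to the compiler
        "$VsixPath\Extract\extension\bin\alc.exe"
    }

    function Get-VSCodeExtensionFromContainer {
        Param(
            [Parameter(Mandatory=$false)]
            [string]$ContainerName = (Get-ContainerFromLaunchJson)
        )

        # The URL of the .vsix is on the line after 'Files:' in the container logs
        $Logs = docker logs $ContainerName
        $VsixUrl = $Logs.item($Logs.indexOf('Files:') + 1)
        # Remove the extension with a regex: TrimEnd('.vsix') trims a set of characters, not a suffix
        $VsixName = (Split-Path $VsixUrl -Leaf) -replace '\.vsix$', ''
        $VsixPath = Join-Path (Split-Path (Get-TFSConfigPath) -Parent) $VsixName
        $VsixFile = (Join-Path -Path $VsixPath -ChildPath $VsixName) + '.vsix'

        # Only download versions of the extension that we don't already have
        if (!(Test-Path $VsixPath)) {
            # Out-Null stops the new directory object being returned alongside the file path
            New-Item -Path $VsixPath -ItemType Directory | Out-Null
            Download-File -sourceUrl $VsixUrl -destinationFile $VsixFile
        }

        $VsixFile
    }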

The above includes some code to save the extracted .vsix files into the AppData folder on the build server to save us downloading and extracting a version of the VS Code extensions that we’ve already got. Over time the .vsix file has grown in size and we can save ourselves some time and disk space by reusing the copy that we’ve already extracted.

Compiling

Having got your hands on the right version of alc.exe you can run it with something like the below:

    Start-Process -FilePath $CompilerPath -ArgumentList (('/project:"{0}"' -f $SourcePath),('/packagecachepath:"{0}"' -f (Join-Path $SourcePath '.alpackages')),('/assemblyProbingPaths:"{0}"' -f (Join-Path $SourcePath '.netpackages'))) -Wait

Assuming the app builds successfully you’ll see a .app file in the root of the source directory. You can now grab that app file and publish it into the container using the navcontainerhelper module.

Testing and Uploading the Results

Having created a container, got the VS Code extension and published the app (with any dependencies) it’s time to run the tests. I’ve been writing about using navcontainerhelper to execute the tests in the container quite a lot lately so I won’t go into all that again.

Suffice to say that we use navcontainerhelper to execute the tests and export the results to XUnit format. We then use the “Publish test results” task to upload those results to the build on Azure DevOps.

    - task: PowerShell@1
      displayName: 'Error on test failure'
      inputs:
        scriptType: inlineScript
        inlineScript: 'Import-Module Tecman.Tfs.Tools;Error-OnTestFailure $(Build.BuildID)'

You might notice Error-OnTestFailure in that inlineScript. Its purpose is to throw an error if any of the tests fail; otherwise the build will be reported as successful, even with failed tests. I suspect setting the AzureDevOps parameter on the Run-TestsInNavContainer function is the better way to do this now though.
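For anyone wanting to roll their own version of that check, the idea can be sketched in a few lines. This is not the implementation of Error-OnTestFailure in our module: the function names are hypothetical, and it assumes that the results file uses the XUnit convention of a result attribute of Pass or Fail on each test element.

```powershell
# Hypothetical helper: counts the failed tests in an XUnit results file
function Get-FailedTestCount {
    Param(
        [Parameter(Mandatory=$true)]
        [string]$ResultsPath
    )

    [xml]$Results = Get-Content -Path $ResultsPath -Raw
    # Assumes each test element has a result attribute of Pass, Fail or Skip
    @($Results.SelectNodes("//test[@result='Fail']")).Count
}

# Hypothetical helper: throws, and so fails the build step, if any test failed
function Assert-NoTestFailure {
    Param(
        [Parameter(Mandatory=$true)]
        [string]$ResultsPath
    )

    $Failed = Get-FailedTestCount -ResultsPath $ResultsPath
    if ($Failed -gt 0) {
        throw "$Failed test(s) failed, see $ResultsPath"
    }
}
```

Running the check as a separate step after the results have been uploaded means that the failed tests are still visible on the build, even though the build itself is marked as failed.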

Uploading the App(s)

If the tests have run successfully then we can upload the app files to the build artefacts. Simply copy the app files into the artefacts directory (defined by the $(Build.ArtifactsStagingDirectory) variable) and run the Publish Build Artifacts task.

    - task: PublishBuildArtifacts@1
      displayName: 'Publish App Package'
      inputs:
        ArtifactName: 'App Package'
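The copy that needs to happen before that task runs is only a couple of lines of PowerShell. A minimal sketch, assuming the compiled .app files are in the root of the source directory; the Copy-AppFilesToArtifacts name is hypothetical and is not a function from our module.

```powershell
# Hypothetical helper: copies every .app file from the root of the source
# directory into the artefacts directory and returns the copied files.
function Copy-AppFilesToArtifacts {
    Param(
        [Parameter(Mandatory=$true)]
        [string]$SourcePath,
        [Parameter(Mandatory=$true)]
        [string]$ArtifactsPath
    )

    # Create the artefacts directory if it doesn't exist yet
    if (!(Test-Path $ArtifactsPath)) {
        New-Item -Path $ArtifactsPath -ItemType Directory | Out-Null
    }

    Get-ChildItem -Path $SourcePath -Filter '*.app' -File |
        Copy-Item -Destination $ArtifactsPath -PassThru
}
```

In an inline PowerShell task this could be called with '$(Build.SourcesDirectory)' and '$(Build.ArtifactsStagingDirectory)' as the two paths.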

Removing the Docker Container

Finally we’re going to remove the Docker container with an inline PowerShell script. Notice the condition property that is attached to this task. In this case we’re just defining that the task should always be run, even if an earlier task has failed. It is possible to get smarter with conditions e.g. only running certain tasks if the build has been triggered in a certain way, or from a particular branch.

    - task: PowerShell@1
      displayName: 'Remove Docker build container'
      inputs:
        scriptType: inlineScript
        inlineScript: 'Import-Module Tecman.Tfs.Tools;Remove-ALBuildContainer $(Build.BuildID)'
      condition: always()

Writing Your Own YAML Pipeline

If you’re reading this post and wondering how on earth you are supposed to know what to type into your blank .azure-pipelines.yml file then remember that the Azure Pipelines extension for VS Code gives you IntelliSense. Just create the file and hit Ctrl+Space to see what’s what.

Conclusion

This post has been a bit of a mixed bag, a rummage through our build pipeline toolkit, but hopefully some of it has been useful. As ever, the best way to learn is to get stuck in and try it out for yourself.