TL;DR

- Extract the package with 7-Zip

- Open the extracted file in VS Code, Notepad++ or your text editor of choice

- Edit the XML as required

- Use 7-Zip to compress the file again in gzip format, then import it into BC
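If you'd rather script the extract and compress steps than click through 7-Zip, Python's standard `gzip` module can do both. This is a minimal sketch. It assumes the package is a single gzip stream, which is what the 7-Zip steps above imply. The function names and file paths are hypothetical.

```python
import gzip
import shutil
from pathlib import Path


def extract_package(package_path: Path, xml_path: Path) -> None:
    """Decompress a gzip-compressed RapidStart package into a plain XML file."""
    with gzip.open(package_path, "rb") as src, open(xml_path, "wb") as dst:
        shutil.copyfileobj(src, dst)


def repack_package(xml_path: Path, package_path: Path) -> None:
    """Compress an edited XML file back into a gzip package ready for import."""
    with open(xml_path, "rb") as src, gzip.open(package_path, "wb") as dst:
        shutil.copyfileobj(src, dst)
```

For example, call `extract_package(Path("MyPackage.rapidstart"), Path("MyPackage.xml"))`, edit the XML, then call `repack_package` with a new package file name so the original stays untouched if something goes wrong.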

Editing Config Packages

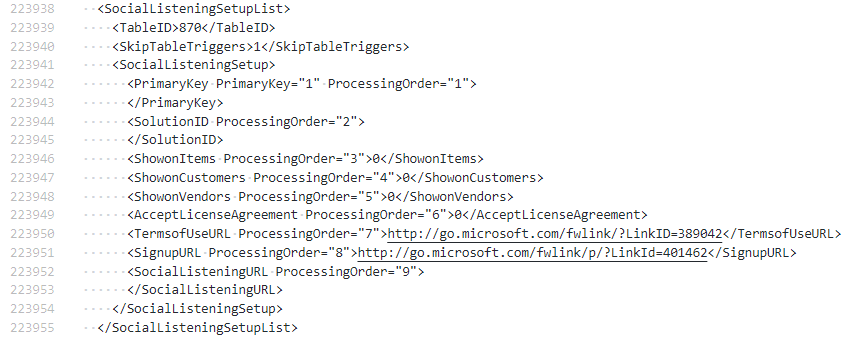

Sometimes you might want to edit a config package file without having to import it into BC, change it and export a modified copy. In my case I wanted to remove the Social Listening Setup table from the package. Microsoft have made this table obsolete, and BC throws an error if I try to import a package that still contains it. (Probably not a bad idea to stop listening to socials anyway.)

Fortunately, a RapidStart file is just a gzip-compressed XML file. Extract it with 7-Zip and then open the extracted file in a text editor. The format is pretty straightforward: each table is represented by an XYZList node, where XYZ is the table name without spaces. That node holds the table-level settings (TableID, PageID and so on), followed by one or more XYZ nodes containing the records.
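To see that structure without scrolling through the whole file, a short script can walk the top-level nodes and report each table's ID and record count. This is a sketch with three assumptions. The XYZList nodes sit directly under the root element. Each record is a child named XYZ, as in the Payment Terms example below. `summarise_tables` is a hypothetical helper name.

```python
import xml.etree.ElementTree as ET


def summarise_tables(xml_data: bytes) -> list[tuple[str, str, int]]:
    """Return (list node name, TableID, record count) for each table in the package XML."""
    root = ET.fromstring(xml_data)  # bytes input lets the XML declaration set the encoding
    summary = []
    for table in root:
        if not table.tag.endswith("List"):
            continue  # skip anything that isn't an XYZList table node
        record_tag = table.tag[: -len("List")]  # e.g. PaymentTermsList -> PaymentTerms
        table_id = table.findtext("TableID", default="?")
        records = table.findall(record_tag)  # direct children holding the data
        summary.append((table.tag, table_id, len(records)))
    return summary
```

Run against a whole package, the Payment Terms table would show up as an entry starting with `("PaymentTermsList", "3", ...)`, followed by the number of records in the package.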

Here are two records for the Payment Terms table.

```xml
<PaymentTermsList>
  <TableID>3</TableID>
  <PageID>4</PageID>
  <SkipTableTriggers>1</SkipTableTriggers>
  <PaymentTerms>
    <Code PrimaryKey="1" ProcessingOrder="1">10 DAYS</Code>
    <DueDateCalculation ProcessingOrder="2">&lt;10D&gt;</DueDateCalculation>
    <DiscountDateCalculation ProcessingOrder="3"></DiscountDateCalculation>
    <Discount ProcessingOrder="4">0</Discount>
    <Description ProcessingOrder="5">Net 10 days</Description>
    <CalcPmtDisconCrMemos ProcessingOrder="6">0</CalcPmtDisconCrMemos>
    <LastModifiedDateTime ProcessingOrder="7"></LastModifiedDateTime>
    <Id ProcessingOrder="8">{6BD87497-B233-EB11-8E89-E8FD151D8C93}</Id>
  </PaymentTerms>
  <PaymentTerms>
    <Code>14 DAYS</Code>
    <DueDateCalculation>&lt;14D&gt;</DueDateCalculation>
    <DiscountDateCalculation></DiscountDateCalculation>
    <Discount>0</Discount>
    <Description>Net 14 days</Description>
    <CalcPmtDisconCrMemos>0</CalcPmtDisconCrMemos>
    <LastModifiedDateTime></LastModifiedDateTime>
    <Id>{6DD87497-B233-EB11-8E89-E8FD151D8C93}</Id>
  </PaymentTerms>
</PaymentTermsList>
```

All I need to do is find the offending Social Listening Setup node in my file and delete it, along with everything inside it. Following the naming pattern above, it should be `SocialListeningSetupList`.
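Deleting the node by hand is easy enough, but the same edit can be scripted with `xml.etree.ElementTree`. This is a sketch with two assumptions. First, the node name follows the XYZList pattern described above. `SocialListeningSetupList` is inferred from that pattern, not copied from a real file. Second, the node sits directly under the root element. `remove_table` is a hypothetical helper.

```python
import xml.etree.ElementTree as ET


def remove_table(xml_data: bytes, list_tag: str) -> tuple[bytes, int]:
    """Drop every top-level <list_tag> node; return the new XML and how many were removed."""
    root = ET.fromstring(xml_data)
    # Collect matches first, then remove, so the element isn't mutated while being iterated.
    matches = [child for child in root if child.tag == list_tag]
    for node in matches:
        root.remove(node)
    # Assumption: BC accepts the re-serialised UTF-8 output. The original encoding,
    # comments and exact formatting (e.g. empty elements) are not preserved.
    new_xml = ET.tostring(root, encoding="utf-8", xml_declaration=True)
    return new_xml, len(matches)
```

Checking the returned count is a cheap way to confirm the node was actually found. A count of 0 usually means the tag name doesn't match what is in the file. If you want the file to stay byte-for-byte identical apart from the deletion, the text-editor route is the safer one.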

Once you have finished editing, use 7-Zip to compress the file again with the gzip method, then import the package into BC.
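Before importing, it can be worth checking that the repacked file is still a valid gzip stream containing well-formed XML. A stray edit is much easier to spot locally than through an import error in BC. This is a minimal standard-library sketch. `check_package` is a hypothetical name, and it only checks well-formedness, not whether BC will accept the contents.

```python
import gzip
import xml.etree.ElementTree as ET
import zlib


def check_package(package_bytes: bytes) -> str:
    """Decompress and parse a package; return the root tag, or raise ValueError if broken."""
    try:
        xml_data = gzip.decompress(package_bytes)
    except (OSError, EOFError, zlib.error) as exc:  # bad header, truncated or corrupt stream
        raise ValueError(f"not a valid gzip file: {exc}") from exc
    try:
        root = ET.fromstring(xml_data)
    except ET.ParseError as exc:  # e.g. a deleted opening tag left its closing tag behind
        raise ValueError(f"XML is not well-formed: {exc}") from exc
    return root.tag
```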