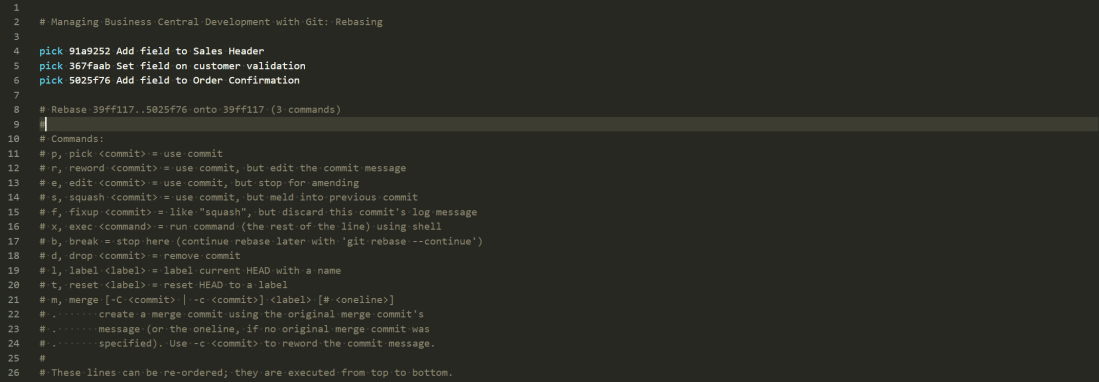

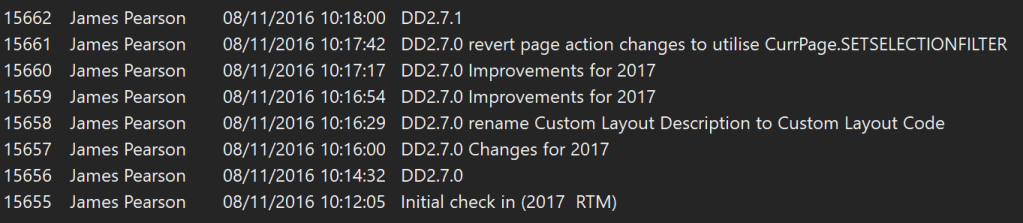

The last few posts have been about manipulating the history of your Git repository and getting comfortable with tools like rebase, reset, cherry-pick and commit --amend. That’s all geared towards creating a history that is more than just a record of stuff that happened, one that tells a story of the development of your app that is useful for your colleagues and your future self.

This post is on the same theme but we’re talking about your branching strategy. Remember one of the strengths of Git is how easy it is to create branches to isolate pieces of development from each other. That’s an awesome tool – but how do we make best use of it?

When is it useful to separate pieces of development from each other in different branches? How and when do you stick the pieces of the jigsaw back together again?

Options

As you’d expect there are a lot of different approaches and no shortage of people online supporting each one. Here are some popular options. I won’t attempt to critique them because we haven’t tried them all, and because you can read about them, try them out for yourselves and form your own opinions.

Git Flow

https://nvie.com/posts/a-successful-git-branching-model/

This approach has a “develop” branch alongside master. Feature branches are used to manage work in progress and are merged into develop. Changes are merged back to master only when they are ready to be released.

GitHub Flow

https://guides.github.com/introduction/flow/

As with Git Flow, work in progress changes are isolated in their own branches. Unlike Git Flow they are merged directly back into master once they have been reviewed and are ready to go.

Trunk Based Development

https://trunkbaseddevelopment.com/

The key idea is to avoid having long-lived branches other than the trunk (master) branch. Development can be done against other branches but only to facilitate code review and discussion. Changes should be committed to master at least every 24 hours.

Considerations

As with adopting any tool or practice, we need to think about our particular circumstances and needs. What are we actually trying to achieve? By all means read about what other people are doing. If you keep reading I’ll share what we’re (currently) doing, but you should think about your own requirements, decide on something that makes sense for you and be prepared to improve it in future.

I think there is something to learn from each of the strategies I’ve linked to.

App Development

We are developing apps for Business Central, either to be deployed via AppSource or to be installed on-premise for customers by our partners. Either way, making a new version of our app available to our customers is not a trivial exercise.

When we submit a new version of our app it is typically at least 3 or 4 working days until it is available in AppSource. For on-prem customers we are reliant on our partners to upgrade the apps manually. Neither of these scenarios exactly falls into the ideal “continuous deployment” category. Some branching strategies are geared towards getting code into master as soon as possible so it can be pushed to the production environment each day, or even multiple times a day.

However attractive that might sound, that just isn’t reality for us – at least not yet. We’re due to be getting an API for pushing updates to AppSource, which is great, but as long as it is backed by a manual certification process I can’t see Microsoft thanking us for pushing multiple updates each day.

Given the lead time to getting a release live we should be quite careful about what is going to go into each one. We don’t really have the luxury of pushing an update immediately after another because we forgot to include something.

#1 Create a Release Branch

We start by creating a release branch. This is where we are going to collect all the changes that should be included in the next release before they are merged into the master branch. We do occasionally bundle in last minute changes and fixes to a release but we ought to have a pretty clear idea of what the release will include before we start.

Imagine we’ve got this repo. All of the commits are merged into the master branch which is tagged with 1.0.0. Tags are useful additional pointers to particular places in the history of the repo. In future if we want to see the code as it was in v1.0.0 we can just run git checkout 1.0.0

    * 3894d1a (HEAD -> master, tag: 1.0.0, origin/master) Correct typo in caption
    * cd03362 Add missing caption for new field
    * 94388de Populate new Customer field OnInsert
    * c49b9c9 Add new field to Customer card
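If you want to try the tag-and-checkout idea without touching a real repo, here is a minimal sketch. It assumes a throwaway repo in a temp directory, empty commits standing in for real changes, a placeholder identity, and one extra commit ("Work after the release") that is purely hypothetical and only there so that the tag and master point to different places.

```shell
set -e                                   # stop at the first failing command
repo=$(mktemp -d)                        # throwaway repo, not the real app
cd "$repo"
git init -q
git symbolic-ref HEAD refs/heads/master  # call the first branch master on any Git version
git config user.name "Example Dev"       # placeholder identity for the sketch
git config user.email "dev@example.com"

# Empty commits stand in for the real changes
git commit -q --allow-empty -m "Add new field to Customer card"
git commit -q --allow-empty -m "Correct typo in caption"
git tag 1.0.0                            # lightweight tag on the current commit

# Hypothetical later commit so master moves on from the tag
git commit -q --allow-empty -m "Work after the release"

git checkout -q 1.0.0                    # detached HEAD at the tagged commit
git log --oneline --decorate -1          # shows the commit that carries the tag
```

Checking out a tag leaves you on a detached HEAD, which is fine for looking around. If you need to change anything from that point, create a branch there first.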

Now create a new branch to use as our release branch. For now this just points to the same commit as master.

    git branch release/1.1.0
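To see that the new branch really is just another pointer to the same commit, you could run something like the following. It is a sketch in a scratch repo (temp directory, placeholder identity, an empty commit in place of real changes), not our actual setup.

```shell
set -e
repo=$(mktemp -d)                        # scratch repo for the experiment
cd "$repo"
git init -q
git symbolic-ref HEAD refs/heads/master
git config user.name "Example Dev"       # placeholder identity
git config user.email "dev@example.com"
git commit -q --allow-empty -m "Correct typo in caption"
git tag 1.0.0

git branch release/1.1.0                 # creates the branch, does not switch to it

git rev-parse master release/1.1.0       # prints the same commit id twice
git symbolic-ref --short HEAD            # still on master
```

Note that git branch only creates the pointer. You stay on master until you check out the new branch.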

#2 Create Individual Feature and Bug Branches

Now we’ll create separate branches for each feature or bug fix that we’ve decided to include in release 1.1.0. Why not just do all the changes we need in the release branch? Because we want to be able to develop and test them separately from each other.

    * 381c83d (HEAD -> bug/commission-calc) Fix rounding error in commission calc
    | * e9d31b4 (feature/sales-report) Action to open sales report from customer
    | * 78102dd Sales report
    |/
    | * c450814 (feature/sales-price-calc) Prices in non-base UOM
    | * dd5f6c0 Prices in additional currencies
    | * 02fa619 Pricing elements per item
    |/
    * 3894d1a (tag: 1.0.0, origin/master, release/1.1.0, master) Correct typo in caption
    * cd03362 Add missing caption for new field
    * 94388de Populate new Customer field OnInsert
    * c49b9c9 Add new field to Customer card

The graph might look something like this now. Separate branches with one or more commits in each. Incidentally, naming the branches feature/* and bug/* is just a convention – it doesn’t have any effect on how they are managed.
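A graph like that could be produced along these lines. This is a sketch only: it runs in a scratch repo, uses empty commits in place of real changes, and gives each branch fewer commits than the graph in the post shows.

```shell
set -e
repo=$(mktemp -d)                        # scratch repo for the experiment
cd "$repo"
git init -q
git symbolic-ref HEAD refs/heads/master
git config user.name "Example Dev"       # placeholder identity
git config user.email "dev@example.com"
git commit -q --allow-empty -m "Correct typo in caption"
git tag 1.0.0
git branch release/1.1.0

# Each piece of work starts from the release branch and stays separate
git checkout -q -b feature/sales-price-calc release/1.1.0
git commit -q --allow-empty -m "Pricing elements per item"

git checkout -q -b feature/sales-report release/1.1.0
git commit -q --allow-empty -m "Sales report"
git commit -q --allow-empty -m "Action to open sales report from customer"

git checkout -q -b bug/commission-calc release/1.1.0
git commit -q --allow-empty -m "Fix rounding error in commission calc"

git log --oneline --graph --decorate --all
```

Passing the release branch as the start point to git checkout -b means it doesn’t matter which branch you happen to be on when you create the next one.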

#3 Create Pull Requests and Complete Quickly

When each feature or bug fix is ready for review and testing we create a pull request targeting the release branch. Pull requests in Azure DevOps are great. However, in my experience there are two main things that make pull requests less great, or even bad.

- Bundling too many changes in a single pull request

- Leaving them open for too long

Having lots of changes makes it difficult to review and test those changes. Which means no one is enthusiastic to do it. Which means it gets left open for a long time.

Leaving pull requests open for a long time means people forget what the changes were for and whether they have already been tested. It becomes a burden that no one wants to take responsibility for. Eventually someone completes it because we’re all sick of seeing it on the list. Not an ideal reason to complete it.

We’ve got a couple of measures on our team dashboard – number of open pull requests and average age of those requests in days. If the average age is creeping over 7, say, then we’re likely doing something wrong.

We squash the commits when the pull request is completed. Like it sounds, that squashes all of the changes that are in the feature or bug branch into a single commit which is added to the release branch. We lose some of the history doing this but I think it makes it more readable later on. We are rarely interested in the details of how we wrote a certain feature – just that we did, and these were the changes that we made.

    * 35cf673 (HEAD -> release/1.1.0) Merged PR 03: Commission Calc
    * b23b8c5 Merged PR 02: Sales Report
    * 8007dcf Merged PR 01: Sales Price Calc
    | * 381c83d (bug/commission-calc) Fix rounding error in commission calc
    |/
    | * e9d31b4 (feature/sales-report) Action to open sales report from customer
    | * 78102dd Sales report
    |/
    | * c450814 (feature/sales-price-calc) Prices in non-base UOM
    | * dd5f6c0 Prices in additional currencies
    | * 02fa619 Pricing elements per item
    |/
    * 3894d1a (tag: 1.0.0, origin/master, master) Correct typo in caption
    * cd03362 Add missing caption for new field
    * 94388de Populate new Customer field OnInsert
    * c49b9c9 Add new field to Customer card

Here is the graph now. I’ve removed the remote branches to keep it simpler. Notice the “Merged PR” commits which have been created by completing the pull requests. I’ve still got local branches with the individual changes. These can safely be deleted now that those changes have been squashed into the release branch.
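We complete pull requests in Azure DevOps, so we never run the squash by hand. If you want to see roughly what it does, the closest local equivalent I know of is git merge --squash. The sketch below assumes a scratch repo and made-up file names (app.txt, report.txt, action.txt), and types in the “Merged PR” message by hand.

```shell
set -e
repo=$(mktemp -d)                        # scratch repo for the experiment
cd "$repo"
git init -q
git symbolic-ref HEAD refs/heads/master
git config user.name "Example Dev"       # placeholder identity
git config user.email "dev@example.com"
echo "base" > app.txt                    # hypothetical file names throughout
git add app.txt
git commit -q -m "Correct typo in caption"
git branch release/1.1.0

# Two commits on the feature branch
git checkout -q -b feature/sales-report release/1.1.0
echo "report" > report.txt
git add report.txt
git commit -q -m "Sales report"
echo "action" > action.txt
git add action.txt
git commit -q -m "Action to open sales report from customer"

# Squash: stage the combined changes on the release branch, then commit once
git checkout -q release/1.1.0
git merge -q --squash feature/sales-report
git commit -q -m "Merged PR 02: Sales Report"

# Git does not treat a squashed branch as merged, so -d would refuse; -D forces it
git branch -D feature/sales-report
git log --oneline --decorate
```

The release branch ends up one commit ahead of master, containing both files. The forced delete is the local counterpart of deleting the leftover branches mentioned above: the work is safe in the squashed commit even though Git can’t tell that from the history.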

#4 Merge into Master and Tag

Each push to the server triggers a pipeline to compile the code and run the tests. Assuming those builds are passing, and given the manual testing that we’ve done, we ought to be confident that the changes work as expected. Each time we complete a pull request it runs a build incorporating the other completed changes. If that passes as well then we’re ready to merge the changes into master, delete the release branch and tag the new version as 1.1.0.

    * 35cf673 (HEAD -> master, origin/master, tag: 1.1.0) Merged PR 03: Commission Calc
    * b23b8c5 Merged PR 02: Sales Report
    * 8007dcf Merged PR 01: Sales Price Calc
    * 3894d1a (tag: 1.0.0) Correct typo in caption
    * cd03362 Add missing caption for new field
    * 94388de Populate new Customer field OnInsert
    * c49b9c9 Add new field to Customer card

The end result – at least what we’re aiming for – is a neat summary of the changes that have been made between the two versions. We can see the changes which we made for each feature or bug fix in those commits. If we want more detail we can always go back and view the completed pull request on Azure DevOps.
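Done locally, the final step might look something like this. It is a sketch in a scratch repo with empty commits standing in for the squashed pull requests. Using a fast-forward merge is my assumption here, chosen because it gives the straight-line history shown in the graph. On the server you may well merge through a pull request instead.

```shell
set -e
repo=$(mktemp -d)                        # scratch repo for the experiment
cd "$repo"
git init -q
git symbolic-ref HEAD refs/heads/master
git config user.name "Example Dev"       # placeholder identity
git config user.email "dev@example.com"
git commit -q --allow-empty -m "Correct typo in caption"
git tag 1.0.0

# Stand-ins for the three completed, squashed pull requests
git checkout -q -b release/1.1.0
git commit -q --allow-empty -m "Merged PR 01: Sales Price Calc"
git commit -q --allow-empty -m "Merged PR 02: Sales Report"
git commit -q --allow-empty -m "Merged PR 03: Commission Calc"

# Move master up to the release, tidy up and tag
git checkout -q master
git merge -q --ff-only release/1.1.0     # fails rather than creating a merge commit
git branch -d release/1.1.0              # safe delete: the branch is fully merged
git tag 1.1.0

git log --oneline 1.0.0..1.1.0           # just the changes between the two versions
```

The last command is the payoff: the range 1.0.0..1.1.0 lists one commit per feature or bug fix and nothing else.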

In a future post we’ll think about how to manage different versions of the code for different versions of Business Central.

Further Reading

Check out Michael Megel’s post on the same topic here: https://never-stop-learning.de/branching-workflow-ci-cd-part-6/